AMD ROCm 7.2.1: Benchmarking Hardware Parity Against Software Friction

The Pitch

ROCm 7.2.1 attempts to bridge the gap between AMD’s Instinct accelerators and NVIDIA’s CUDA dominance by providing an open-source stack for AI training and inference. While it now offers official support for consumer RDNA 4 hardware, it remains a high-effort alternative requiring significant engineering overhead to maintain stability (UsedBy Dossier).

Under the Hood

ROCm 7.2.1, released March 25, 2026, officially supports the RDNA 4 architecture and Ubuntu 24.04.4 HWE kernels (GitHub ROCm Release Notes). In the data center, the MI355X has proven competitive, trailing NVIDIA’s B200 by only single-digit percentages in the latest MLPerf Inference 6.0 benchmarks (MLPerf/EETimes). Windows support has also arrived for specific Radeon AI Pro cards, though feature parity with Linux remains incomplete (AMD Docs).

Despite these hardware wins, the developer experience is still defined by "dependency hell" and firmware instability. A major VGPR mismatch bug in January 2026 caused frequent system hangs on flagship Strix Halo silicon (YouTube/Reddit). Furthermore, the new "TheRock" build system requires packaging over 30 dependencies to achieve deterministic builds, making it a maintenance burden for small teams (HN Thread).

Software porting remains the primary bottleneck for inference latency. Porting CUDA-specific libraries, such as FlashAttention 3, currently incurs a 20-30% performance penalty in low-latency workloads (Spheron Network 2026 Benchmark). Installing ROCm still feels less like a software update and more like a hazing ritual for junior DevOps engineers.
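To make the 20-30% figure concrete, here is a minimal arithmetic sketch. The cited benchmark summary does not say whether the penalty is measured as added latency or as lost throughput, so the code shows both readings. The 50 ms baseline and the function name `latency_with_penalty` are hypothetical, chosen only for illustration, and are not taken from the benchmark.

```python
def latency_with_penalty(baseline_ms: float, penalty: float, kind: str = "throughput") -> float:
    """Return the per-request latency implied by a fractional performance penalty.

    kind="latency":    the penalty is extra time, so latency scales by (1 + penalty).
    kind="throughput": the penalty is lost throughput, so latency scales by 1 / (1 - penalty).
    """
    if not 0.0 <= penalty < 1.0:
        raise ValueError("penalty must be in the range [0, 1)")
    if kind == "latency":
        return baseline_ms * (1.0 + penalty)
    if kind == "throughput":
        return baseline_ms / (1.0 - penalty)
    raise ValueError("kind must be 'latency' or 'throughput'")


# Hypothetical baseline: latency of the native CUDA path, in milliseconds.
BASELINE_MS = 50.0

if __name__ == "__main__":
    for penalty in (0.20, 0.30):
        as_latency = latency_with_penalty(BASELINE_MS, penalty, "latency")
        as_throughput = latency_with_penalty(BASELINE_MS, penalty, "throughput")
        print(
            f"penalty {penalty:.0%}: "
            f"{as_latency:.1f} ms if measured as latency, "
            f"{as_throughput:.1f} ms if measured as throughput"
        )
```

The two readings diverge as the penalty grows, so a team with a fixed latency budget should confirm which one a benchmark reports before ruling a port in or out.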

We currently lack information on two critical fronts:

- A "Tier 1" support timeline for legacy Radeon RX 400/500 series cards (UsedBy Dossier).

- Long-term support guarantees for the "TheRock" deterministic build system versus standard releases (UsedBy Dossier).

Marcus's Take

ROCm 7.2.1 is finally a credible threat to NVIDIA's pricing, but it is not yet a credible threat to its developer ecosystem. Unless you have a dedicated DevOps team capable of maintaining custom LLVM toolchains, the performance penalty on ported libraries makes it a poor choice for low-latency production inference. It is a viable path for hyperscalers seeking to avoid the NVIDIA tax, but for mid-sized teams, it remains a high-risk distraction.

Ship clean code,

Marcus.

Marcus Webb - Senior Backend Analyst at UsedBy.ai

Related Articles

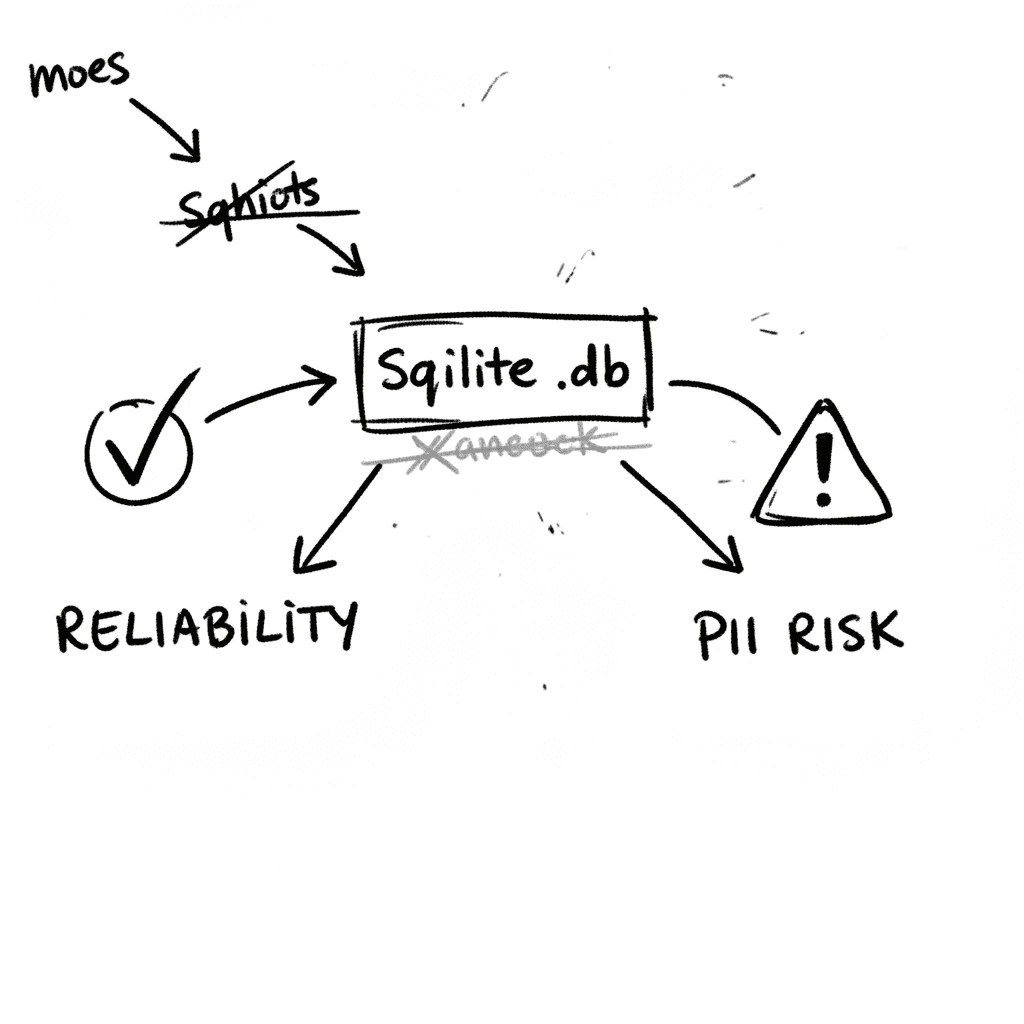

SQLite 3.53.1: Technical Reliability vs. Compliance Governance

SQLite is the industry’s default embedded database, now officially designated as a Recommended Storage Format (RSF) by the U.S. Library of Congress (Source: loc.gov RFS 2026). It remains the most depl

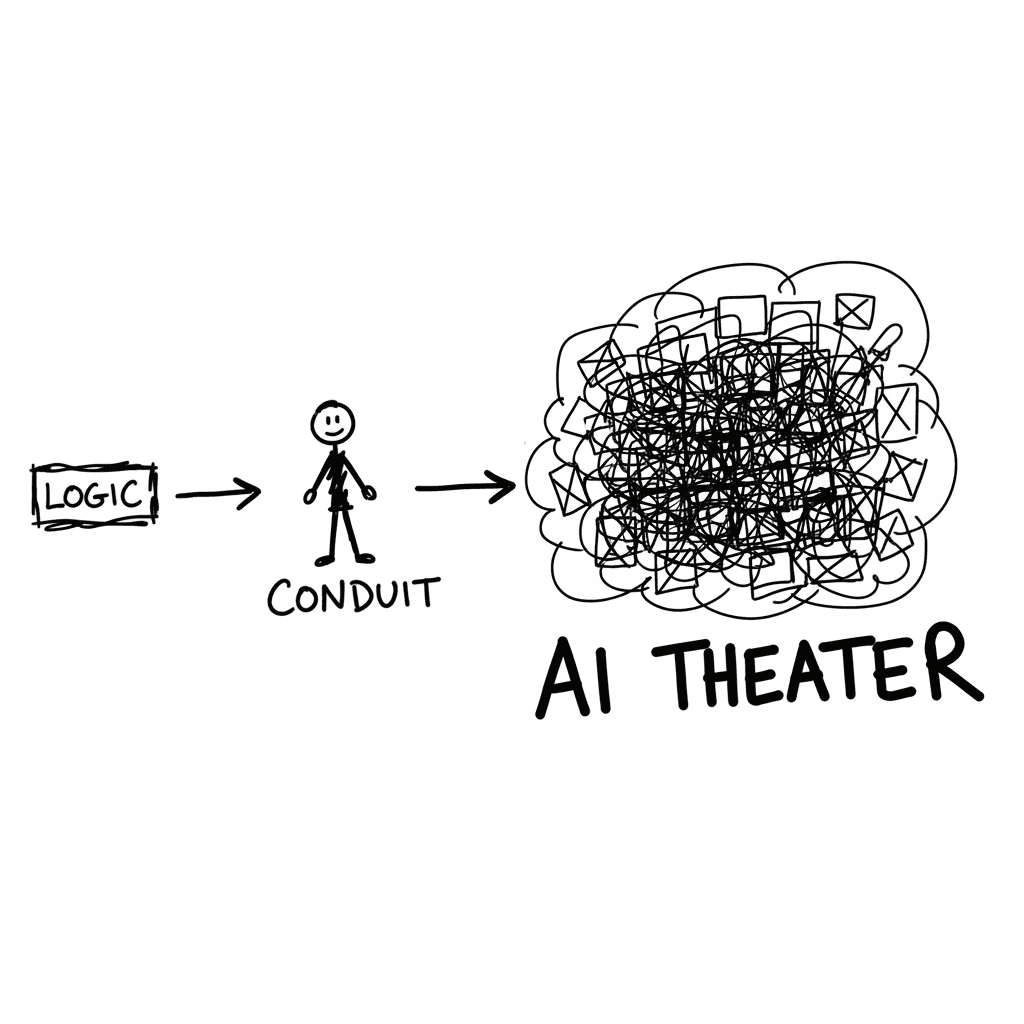

The Conduit Problem: Generative AI and the Hollowing of Technical Expertise

The primary metric for developer productivity in mid-2026 has shifted from logic density to artifact volume, fueled by LLM-driven "elongation" of workplace outputs. This phenomenon, labeled AI Product

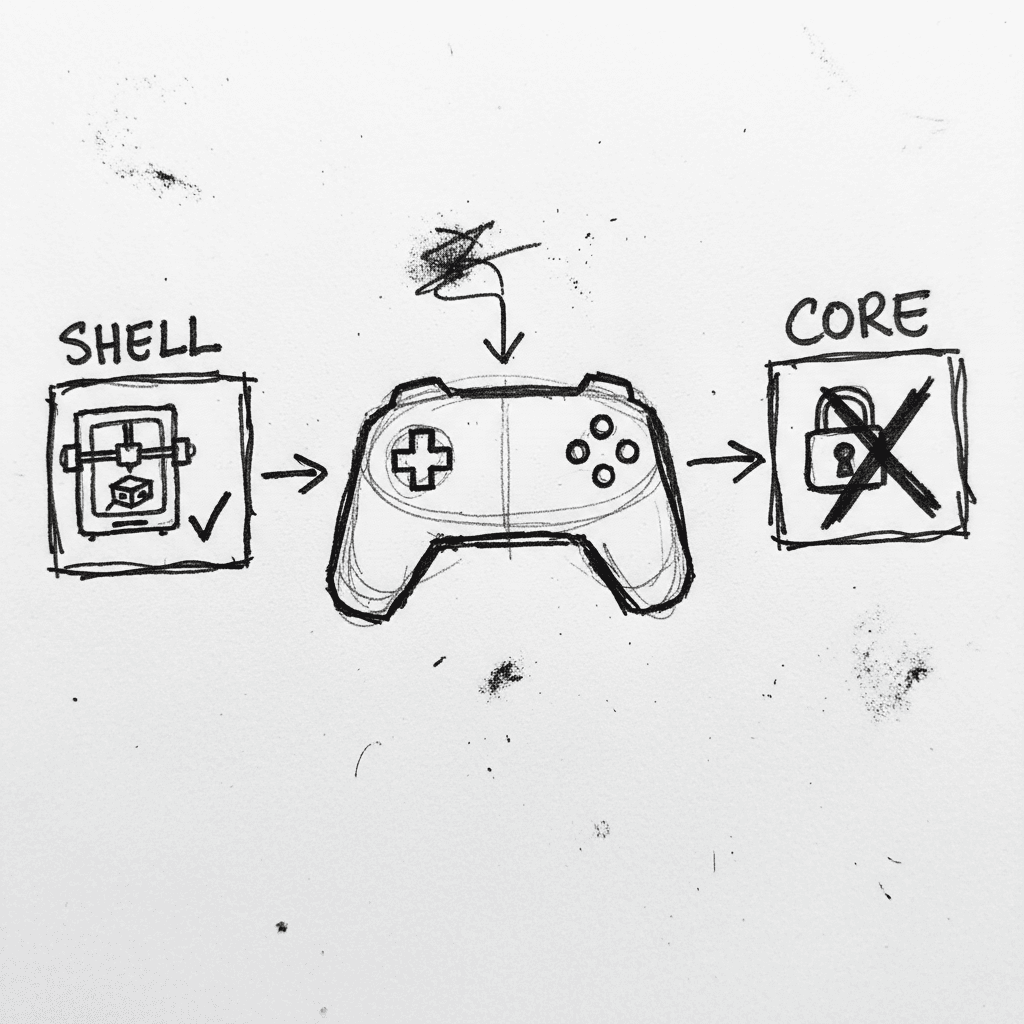

Valve Releases CAD Files for Steam Controller 2026 and Magnetic Puck

Valve has published the full engineering specifications and CAD files for the 2026 Steam Controller shell and its magnetic charging "Puck" on GitLab. (GitLab) This release, licensed under CC BY-NC-SA

Stay Ahead of AI Adoption Trends

Get our latest reports and insights delivered to your inbox. No spam, just data.