Claude Code: Engineering Misfires and the 14k Token Tax

The Pitch

Claude Code is a terminal-based autonomous agent designed to execute multi-file refactors and complex engineering tickets using the Opus 4.7 reasoning engine. It currently leads the SWE-bench Verified leaderboard at 87.6%, but its reputation has recently been marred by silent performance throttling and significant architectural overhead (Vellum.ai).

Under the Hood

The "intelligence regression" that users reported in early 2026 was not a deliberate model nerf but a series of engineering failures. Anthropic admitted that a caching bug introduced on March 26 caused the agent to wipe its thinking blocks on every turn, resulting in repetitive loops and memory loss (Anthropic April 23 Postmortem). A silent downgrade of the default reasoning effort from "high" to "medium" on March 4, made to manage latency, compounded the problem (Anthropic Engineering Blog).

Current benchmarks confirm that Claude Code v2.1.116 has reverted these changes, along with a restrictive 100-word response limit that had previously degraded output quality (Lets Data Science). The architectural cost remains high, however. The system prompt for Claude Code is approximately 14,300 tokens, roughly 14 times the 1,000 tokens used by the Pi agent (Medium / pi.dev).
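To put that gap in concrete terms, here is a back-of-the-envelope sketch. The two prompt sizes are the approximate figures quoted above; the 50-request session length is a made-up number for illustration, and the calculation assumes no caching at all.

```python
# Approximate system prompt sizes quoted in the article.
CLAUDE_CODE_PROMPT_TOKENS = 14_300
PI_PROMPT_TOKENS = 1_000


def prompt_overhead(requests: int, prompt_tokens: int) -> int:
    """Total system-prompt tokens sent over `requests` uncached requests."""
    return requests * prompt_tokens


ratio = CLAUDE_CODE_PROMPT_TOKENS / PI_PROMPT_TOKENS
extra_per_request = CLAUDE_CODE_PROMPT_TOKENS - PI_PROMPT_TOKENS

# Hypothetical session length, chosen only for illustration.
session_requests = 50
claude_total = prompt_overhead(session_requests, CLAUDE_CODE_PROMPT_TOKENS)
pi_total = prompt_overhead(session_requests, PI_PROMPT_TOKENS)

print(f"prompt size ratio: {ratio:.1f}x")
print(f"extra tokens per request: {extra_per_request:,}")
print(f"uncached overhead over {session_requests} requests: "
      f"{claude_total:,} vs {pi_total:,} tokens")
```

Swap in your own session length to see how quickly the fixed overhead adds up before the agent has read a single line of your code.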

This token inefficiency creates a specific financial risk for teams not on enterprise tiers. Every request carries that overhead up front, and the caching bugs identified in March turned sequential requests into "cache misses," effectively burning through subscription limits (HN / Reddit). Anthropic’s idea of "stealth optimization" is about as subtle as a foghorn in a library, and users are paying for the noise.
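A rough model shows why a broken cache hurts so much with a prompt this size. This is a sketch under stated assumptions, not Anthropic's billing logic: `cached_read_factor` (the fraction of full cost charged for a cached prompt) and the hit counts are illustrative placeholders, and how subscription limits actually meter cached tokens is not covered by the sources above.

```python
PROMPT_TOKENS = 14_300  # approximate system prompt size quoted in the article


def effective_prompt_cost(hits: int, misses: int,
                          prompt_tokens: int = PROMPT_TOKENS,
                          cached_read_factor: float = 0.1) -> float:
    """System-prompt cost in full-price-token equivalents.

    A miss pays for the whole prompt; a hit pays only a fraction of it.
    `cached_read_factor` is an assumed discount, not a confirmed rate.
    """
    return misses * prompt_tokens + hits * prompt_tokens * cached_read_factor


# Hypothetical 50-request session.
healthy = effective_prompt_cost(hits=45, misses=5)   # cache mostly working
broken = effective_prompt_cost(hits=0, misses=50)    # every request misses

print(f"healthy cache: {healthy:,.0f} token-equivalents")
print(f"broken cache:  {broken:,.0f} token-equivalents")
print(f"multiplier:    {broken / healthy:.1f}x")
```

Under these assumed numbers, a session where every request misses costs several times what the same session costs with a working cache, which matches the pattern users described.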

We still don't know whether the "Claude Cowork" platform suffers from the same session-clearing logic errors found in the CLI. Details on OpenAI’s rumored "unlimited tokens" tier to counter Anthropic’s dominance also remain unconfirmed (HN anecdotal). What is verified is that Opus 4.7 currently outperforms GPT-5.4 in technical reasoning, provided you can afford the "Anthropic Tax" on tokens.

Marcus's Take

Skip it for routine tasks and use a leaner wrapper like Pi or Goose to save your budget. Claude Code is only worth the token burn when you are tackling a "Tier 1" architectural mess that requires the 87.6% SWE-bench reasoning of Opus 4.7. Anthropic has shown it will silently throttle performance to cut latency, so monitor your logs for "reasoning effort" shifts after every minor update.
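One low-tech way to do that monitoring is to record the settings you care about before and after each update and diff them. The sketch below assumes you capture those snapshots yourself as plain dictionaries; the key names (`reasoning_effort`, `max_response_words`) are hypothetical labels for illustration, not fields from any real Claude Code log or config file.

```python
def changed_settings(before: dict, after: dict) -> dict:
    """Return {key: (old, new)} for every setting that differs between snapshots."""
    keys = before.keys() | after.keys()
    return {
        key: (before.get(key), after.get(key))
        for key in sorted(keys)
        if before.get(key) != after.get(key)
    }


# Hypothetical snapshots recorded by hand around a minor update.
snapshot_before = {"reasoning_effort": "high", "max_response_words": None}
snapshot_after = {"reasoning_effort": "medium", "max_response_words": None}

for key, (old, new) in changed_settings(snapshot_before, snapshot_after).items():
    print(f"{key}: {old!r} -> {new!r}")
```

Keys missing from one snapshot show up as `None` on that side, so a setting that appears or disappears after an update is flagged as well.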

Ship clean code,

Marcus.

Marcus Webb - Senior Backend Analyst at UsedBy.ai

Related Articles

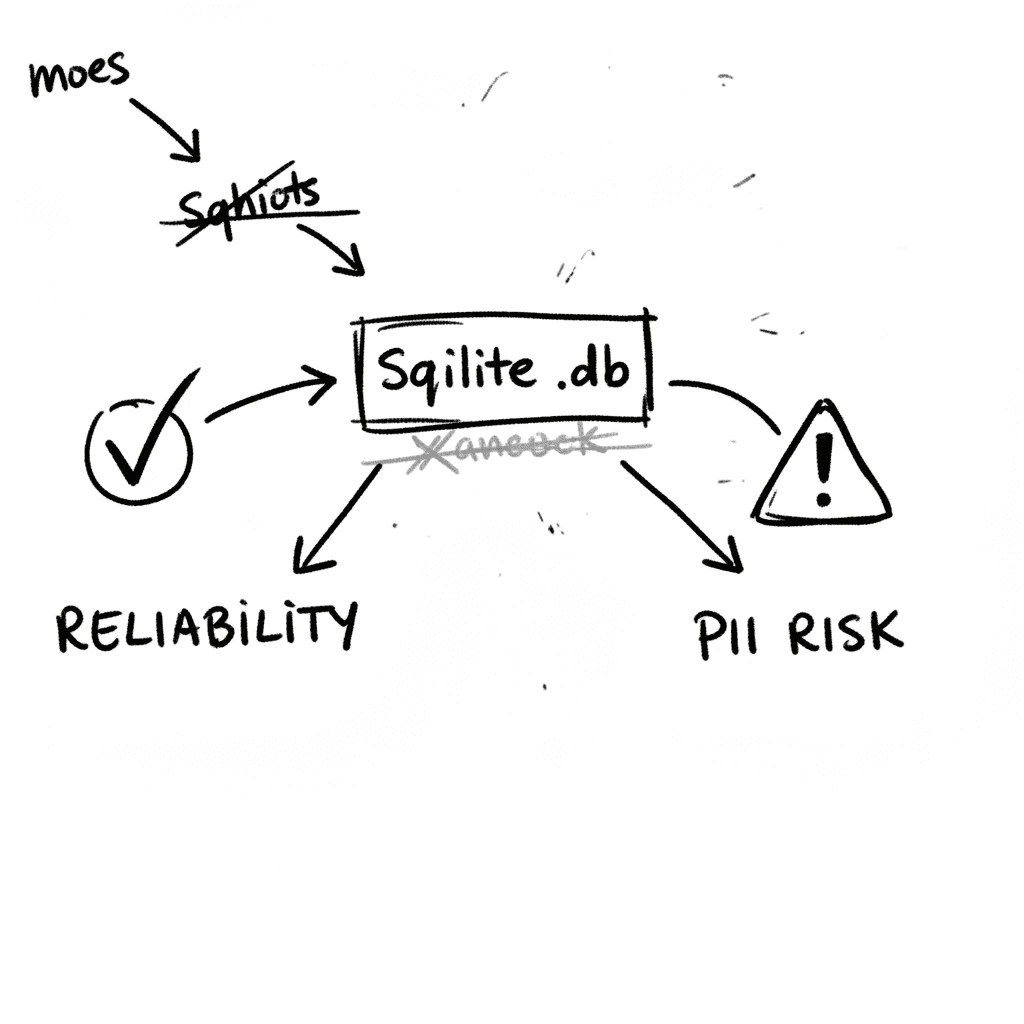

SQLite 3.53.1: Technical Reliability vs. Compliance Governance

SQLite is the industry’s default embedded database, now officially designated as a Recommended Storage Format (RSF) by the U.S. Library of Congress (Source: loc.gov RFS 2026). It remains the most depl

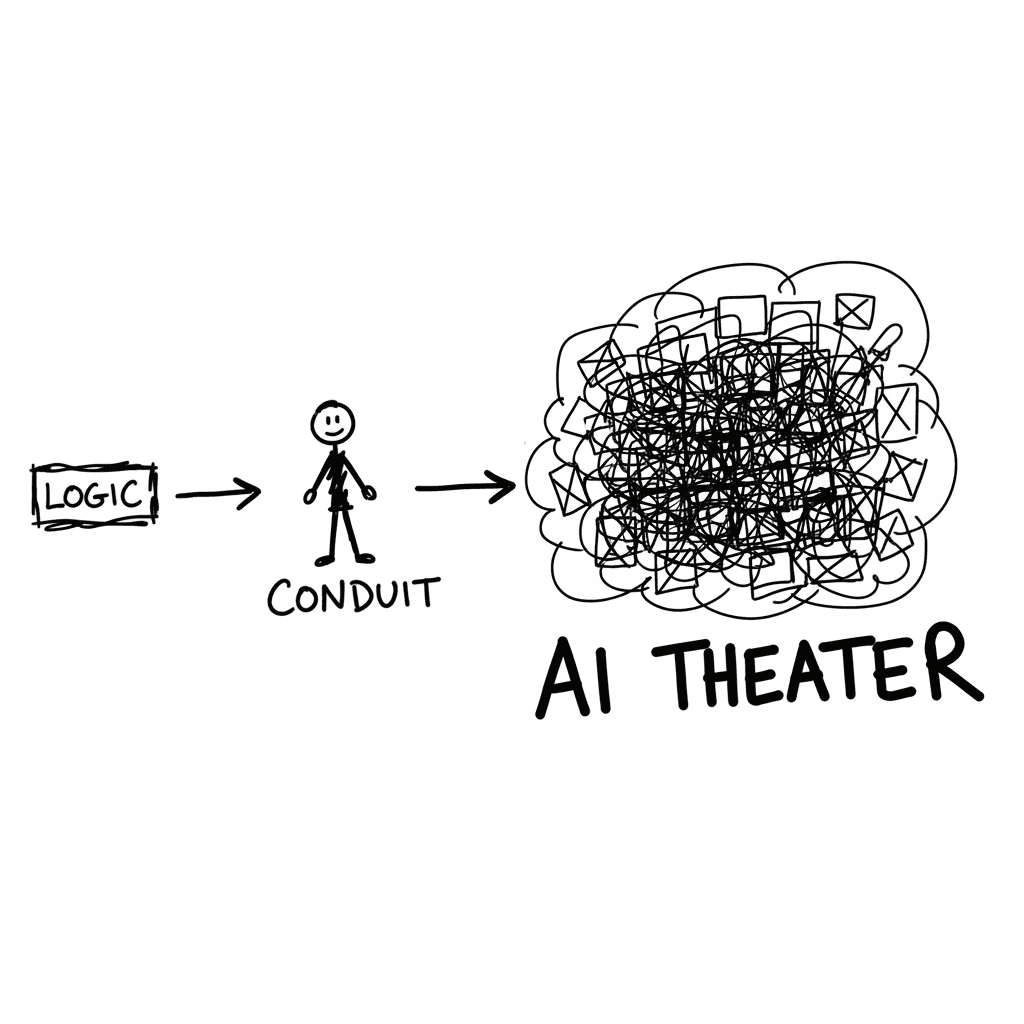

The Conduit Problem: Generative AI and the Hollowing of Technical Expertise

The primary metric for developer productivity in mid-2026 has shifted from logic density to artifact volume, fueled by LLM-driven "elongation" of workplace outputs. This phenomenon, labeled AI Product

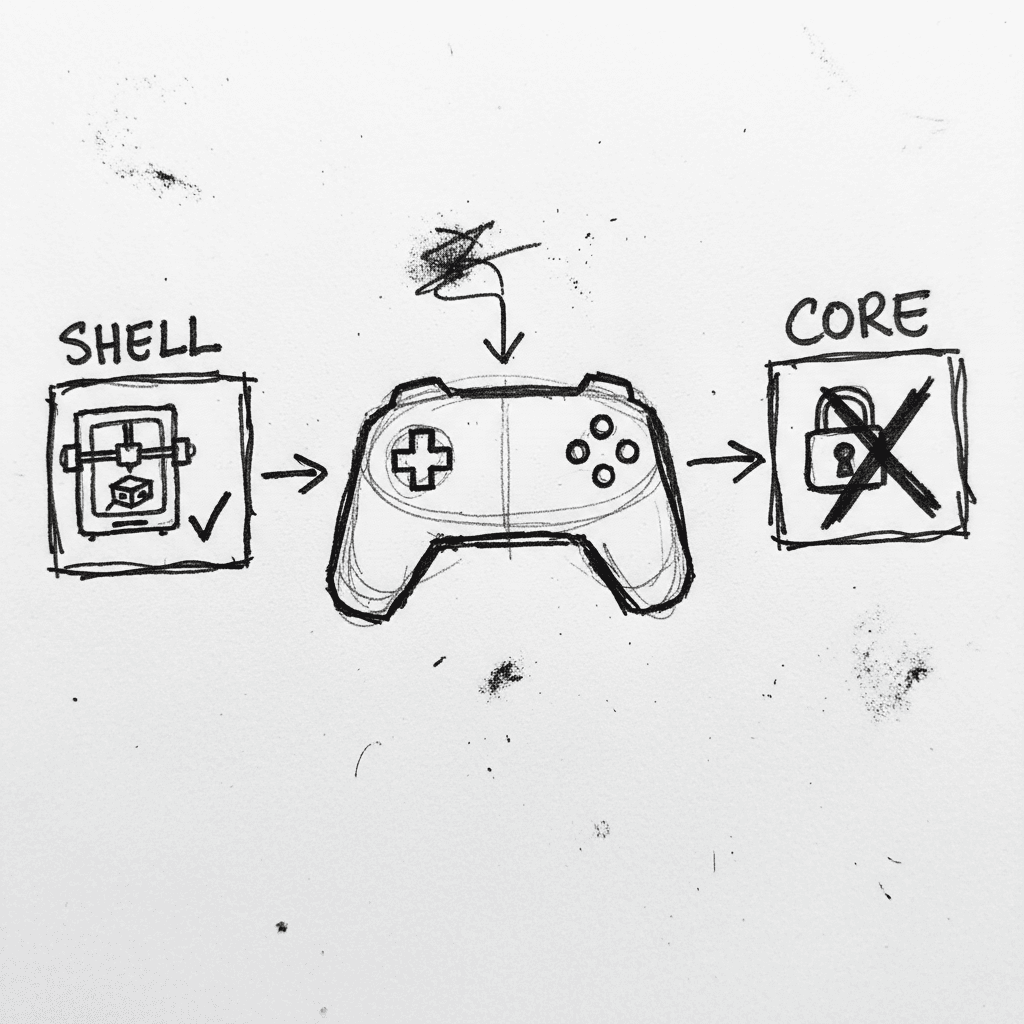

Valve Releases CAD Files for Steam Controller 2026 and Magnetic Puck

Valve has published the full engineering specifications and CAD files for the 2026 Steam Controller shell and its magnetic charging "Puck" on GitLab. (GitLab) This release, licensed under CC BY-NC-SA

Stay Ahead of AI Adoption Trends

Get our latest reports and insights delivered to your inbox. No spam, just data.