Talkie-1930: Data Isolation and the 13B Historical Baseline

The Pitch

Talkie-1930 is a 13B-parameter language model trained exclusively on a 260B-token corpus of text published before 1931. Developed by Alec Radford and David Duvenaud, it aims to provide a contamination-free baseline for research into AI generalization and historical simulation (Hugging Face).

Under the Hood

The model weights are released under an Apache 2.0 license, making the 13B checkpoint accessible for local deployment (talkie-lm/talkie-1930-13b-it). Instruction-tuning was bootstrapped using era-appropriate sources, such as 1920s etiquette manuals and encyclopedias (Hugging Face).

Using 1920s manuals for tuning is a bold choice, though I suspect most modern developers would fail the model's standards of politeness within three prompts. A 24/7 live demo currently features Claude 4 Sonnet acting as an interrogator to test the model's historical boundaries (talkie-lm.com).

However, the technical execution reveals significant cracks:

* Temporal leakage: Knowledge from after the 1930 cutoff, including the New Deal and the UN, has slipped past the filters (talkie-lm.com).

* Generation bugs: Users report frequent output truncation, with the model stopping mid-sentence (HN).

* Historical bias: The output mirrors the colonialist and social prejudices of the early 20th century (Reddit).

* Architecture: The specific base transformer architecture remains unconfirmed (UsedBy Dossier).
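Two of the issues above, truncation and temporal leakage, are easy to spot-check on your own generations. Here is a minimal sketch, not the developers' tooling: the watch list `POST_1930_TERMS` and both function names are hypothetical, and the heuristics are deliberately crude (a pre-1931 text can legitimately say "a new deal", for instance).

```python
import re

# Hypothetical watch list of terms that should not appear in pre-1931 text.
# Seeded from the leaks reported above; extend it for your own probes.
POST_1930_TERMS = ("New Deal", "United Nations")

# Sentence-final punctuation, optionally followed by a closing quote or bracket.
_SENTENCE_END = re.compile(r"[.!?][\"')\]]*$")


def looks_truncated(text: str) -> bool:
    """Heuristic: True if non-empty text does not end on sentence-final punctuation."""
    stripped = text.rstrip()
    return bool(stripped) and _SENTENCE_END.search(stripped) is None


def find_anachronisms(text: str, terms=POST_1930_TERMS) -> list:
    """Return the watch-list terms that occur in the text as whole phrases."""
    hits = []
    for term in terms:
        pattern = r"\b" + re.escape(term) + r"\b"
        if re.search(pattern, text, flags=re.IGNORECASE):
            hits.append(term)
    return hits
```

Run both checks over a batch of saved completions and count the hits. That gives you a rough truncation and leakage rate before you decide whether the model is usable for your experiment.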

We don't know yet whether the developers will release the "anachronism classifier" used to filter the 260B-token dataset. Without that tool, independently replicating or auditing their data pipeline for future historical models is not feasible.
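To make the auditing problem concrete, here is a toy stand-in for one check a date filter might perform: reject any document that mentions a four-digit year after the cutoff. This is my own illustration under stated assumptions, not the unreleased classifier. `CUTOFF_YEAR`, the regex, and all function names are hypothetical, and a real filter would need to be far more careful (a 1925 novel can mention the year 1950).

```python
import re

# Assumed cutoff, taken from "published before 1931".
CUTOFF_YEAR = 1930

# Matches standalone four-digit numbers from 1000 to 2999.
_YEAR = re.compile(r"\b([12]\d{3})\b")


def latest_year_mentioned(text: str):
    """Return the largest four-digit year found in the text, or None."""
    years = [int(match) for match in _YEAR.findall(text)]
    return max(years) if years else None


def passes_cutoff(text: str, cutoff: int = CUTOFF_YEAR) -> bool:
    """True if the text mentions no year later than the cutoff."""
    latest = latest_year_mentioned(text)
    return latest is None or latest <= cutoff


def filter_corpus(documents):
    """Keep only the documents that pass the year check."""
    return [doc for doc in documents if passes_cutoff(doc)]
```

Even this crude rule shows why the real classifier matters: it says nothing about undated text, which is exactly where concepts like the New Deal could slip through.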

Marcus's Take

Talkie-1930 is a technical curiosity that belongs in the laboratory, not in your production stack. While the data isolation is a rigorous engineering exercise, the frequent output truncation and knowledge leakage make it far too unstable for any practical application. Unless you are specifically conducting research into AI generalization or historical linguistics, skip it. It is a well-intentioned museum piece that breaks under pressure.

Ship clean code,

Marcus.

Marcus Webb - Senior Backend Analyst at UsedBy.ai

Related Articles

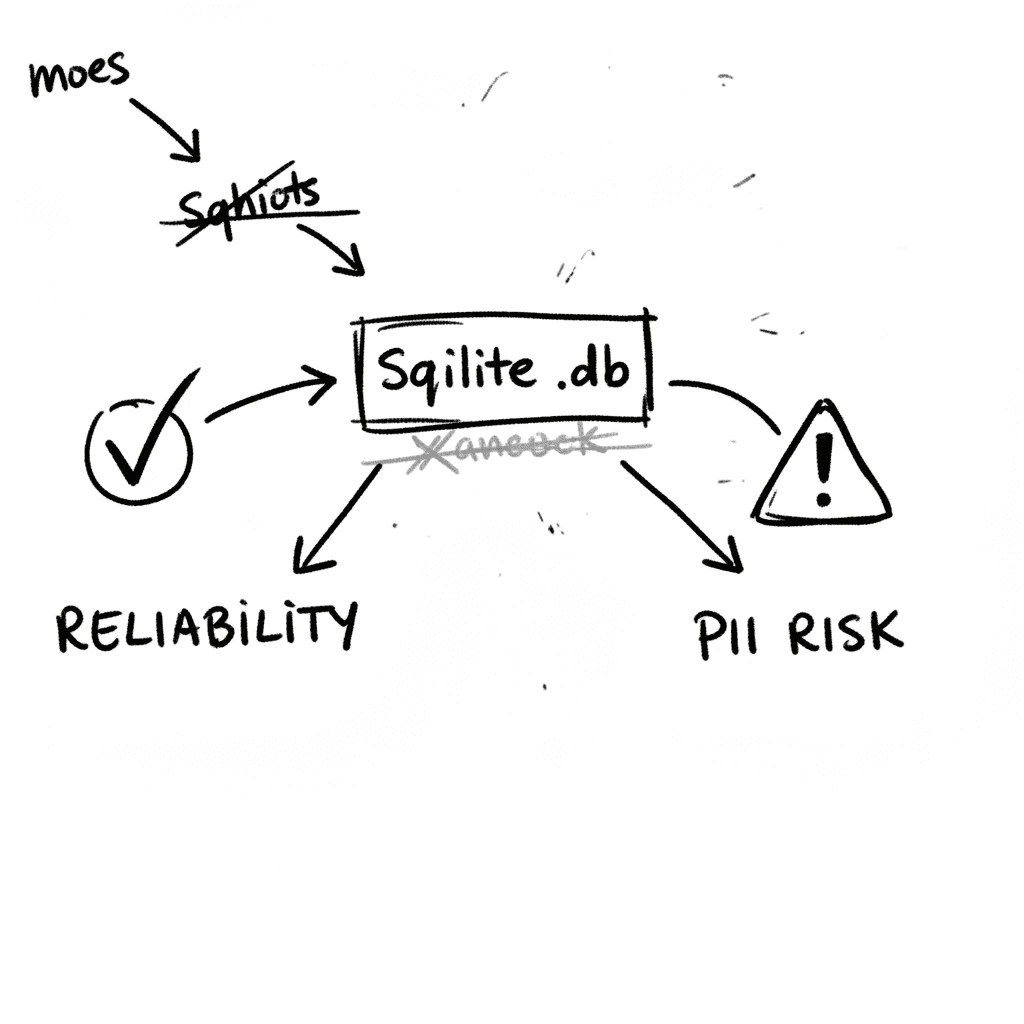

SQLite 3.53.1: Technical Reliability vs. Compliance Governance

SQLite is the industry’s default embedded database, now officially designated as a Recommended Storage Format (RSF) by the U.S. Library of Congress (Source: loc.gov RFS 2026). It remains the most depl

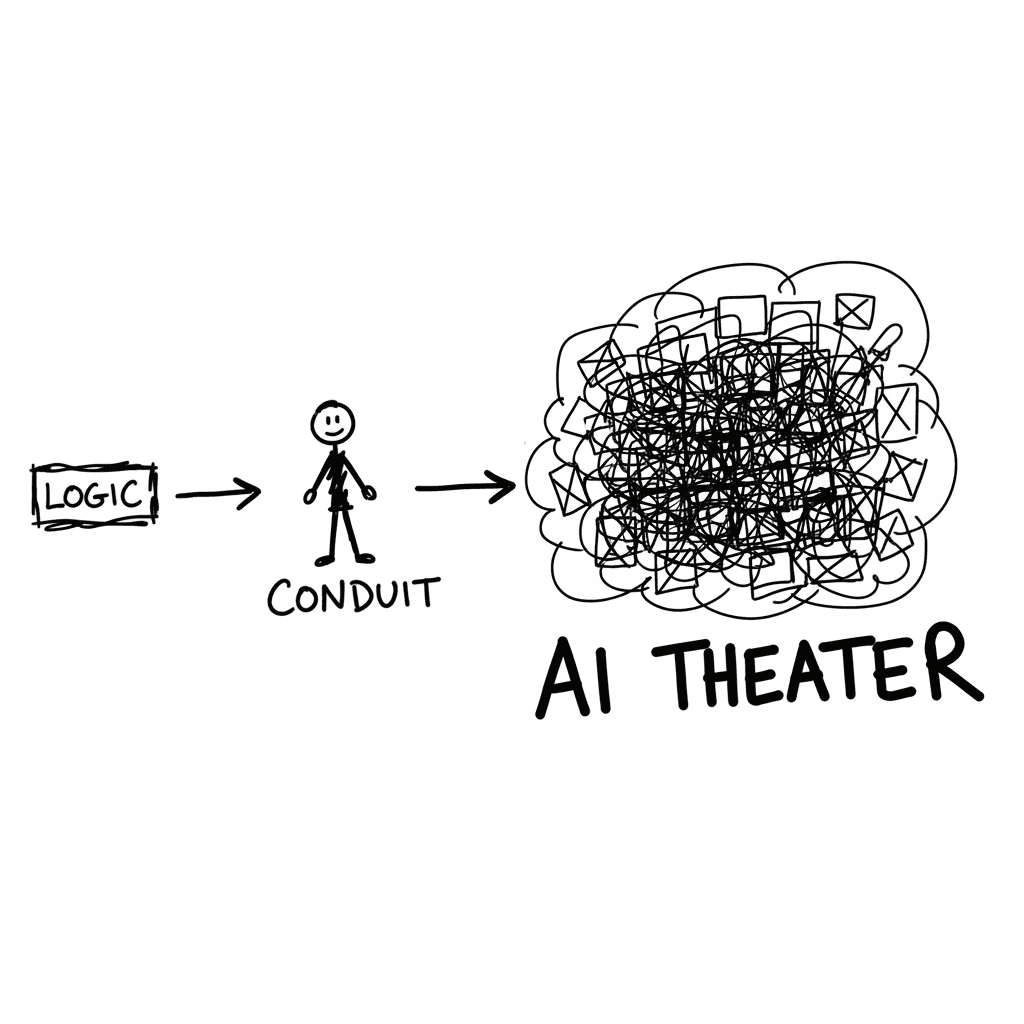

The Conduit Problem: Generative AI and the Hollowing of Technical Expertise

The primary metric for developer productivity in mid-2026 has shifted from logic density to artifact volume, fueled by LLM-driven "elongation" of workplace outputs. This phenomenon, labeled AI Product

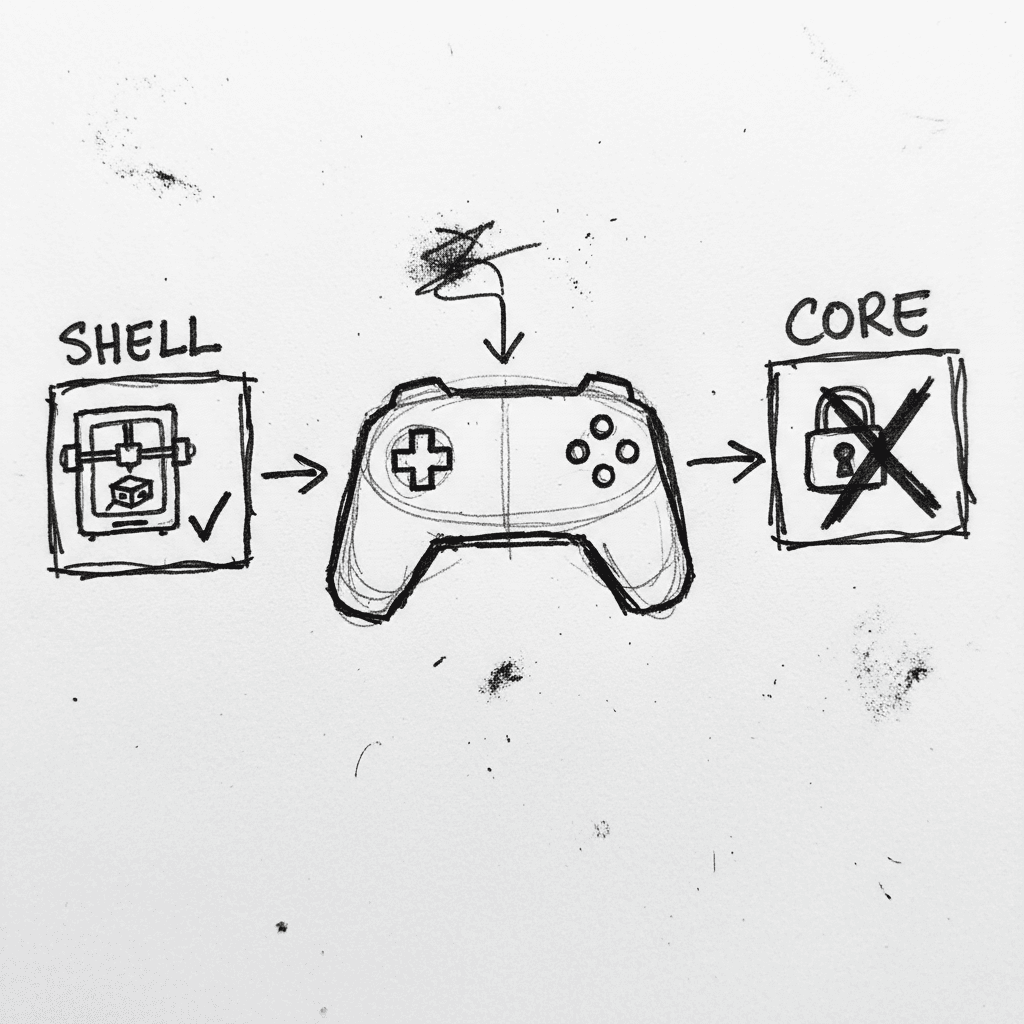

Valve Releases CAD Files for Steam Controller 2026 and Magnetic Puck

Valve has published the full engineering specifications and CAD files for the 2026 Steam Controller shell and its magnetic charging "Puck" on GitLab. (GitLab) This release, licensed under CC BY-NC-SA

Stay Ahead of AI Adoption Trends

Get our latest reports and insights delivered to your inbox. No spam, just data.