Automated Hardware Matching and Model Benchmarking with whichllm

The Pitch

whichllm is a CLI utility that automates hardware-to-model mapping by scanning local system resources and cross-referencing HuggingFace performance data (GitHub). It aims to eliminate the manual VRAM calculations and quantization math typically required to select the optimal local model for specific infrastructure.
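
For a sense of the manual math being replaced, here is a minimal back-of-envelope sketch. It assumes the weights dominate memory use and that a flat 20% allowance covers KV cache and runtime buffers. The function names and the 20% figure are illustrative assumptions, not whichllm's internals.

```python
def estimate_vram_gib(params_billion: float, bits_per_weight: float,
                      overhead_fraction: float = 0.2) -> float:
    """Rough memory needed for the weights plus a flat runtime allowance.

    overhead_fraction is an assumed fudge factor, not a measured value.
    """
    # bits per weight / 8 gives bytes per weight at the chosen quantization
    weight_bytes = params_billion * 1e9 * bits_per_weight / 8
    return weight_bytes * (1 + overhead_fraction) / 2**30


def fits(params_billion: float, bits_per_weight: float, vram_gib: float,
         overhead_fraction: float = 0.2) -> bool:
    """True if the estimate is within the available VRAM."""
    needed = estimate_vram_gib(params_billion, bits_per_weight, overhead_fraction)
    return needed <= vram_gib
```

Under these assumptions a 7B model at 4 bits per weight comes out at roughly 3.9 GiB, so `fits(7, 4, 8)` passes on an 8 GiB card, while a 70B model at the same quantization does not fit in 24 GiB.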

Under the Hood

The tool automatically detects NVIDIA, AMD, and Apple Silicon hardware to determine available compute and memory headroom (GitHub README). It integrates real evaluation scores using a 'confidence-based dampening' logic intended to filter out unreliable benchmark results (GitHub Source).
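
The source does not spell out the dampening formula, so the following is only one plausible reading of 'confidence-based dampening': pull a benchmark score toward a neutral baseline in proportion to how little it is trusted. The name `dampened_score`, the `baseline` parameter, and the 0-to-1 confidence scale are all hypothetical.

```python
def dampened_score(raw_score: float, confidence: float,
                   baseline: float = 0.0) -> float:
    """Shrink raw_score toward baseline as confidence falls.

    Hypothetical illustration; not whichllm's actual formula.
    confidence = 1.0 keeps the raw score, 0.0 returns the baseline.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    # Linear interpolation between the baseline and the reported score
    return baseline + confidence * (raw_score - baseline)
```

With a baseline of 50, a reported score of 80 at confidence 0.5 is treated as 65, so a poorly attested result cannot outrank a well-attested one by a narrow margin.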

For deployment, it provides a 'whichllm run' command to instantly download and initiate chat sessions with recommended models (GitHub README). The engine relies on a local cache for HuggingFace metadata to reduce latency during the selection process (GitHub Source).
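
A metadata cache of this kind can be sketched as a JSON file with a timestamp and a time-to-live. This is an assumption about the general pattern, not a description of whichllm's cache layout: the `load_cached` helper, the file format, and the one-day default TTL are hypothetical, and `fetch` stands in for whatever call retrieves the metadata.

```python
import json
import time
from pathlib import Path


def load_cached(path: Path, fetch, ttl_seconds: float = 86400, now=None):
    """Return cached data if it is fresh, otherwise call fetch() and store it.

    fetch is any zero-argument callable returning JSON-serialisable data.
    now can be injected for testing; it defaults to the current time.
    """
    now = time.time() if now is None else now
    if path.exists():
        entry = json.loads(path.read_text())
        if now - entry["fetched_at"] < ttl_seconds:
            return entry["data"]  # cache hit: no network round trip
    data = fetch()
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps({"fetched_at": now, "data": data}))
    return data
```

The trade-off is the one raised later in this piece: a long TTL keeps selection fast but lets recommendations drift behind the current model landscape.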

However, the tool currently has a critical installation problem. The Homebrew installation fails for many users on macOS 26 due to unresolved Rosetta/ARM prefix conflicts (GitHub Issue #4624).

Furthermore, the model database is lagging behind the May 2026 landscape. Users report the tool frequently suggests legacy weights like Qwen 2.5 even when hardware is capable of running current S-tier models like Qwen 3.6 (HN Comment).

We also observe significant degradation in token generation speed when utilising long context windows on consumer-grade hardware (HN Comment). We do not yet know how frequently the internal benchmark metadata is synced with the current 2026 leaderboards, and the power-draw estimation logic for agentic use cases is not public.

Marcus's Take

whichllm is a personal shopper for your GPU, though one that currently insists on selling you last season’s fashions. While the hardware detection is technically sound, the reliance on an outdated model database and the broken macOS 26 installation make it a frustration for any serious backend workflow. Play with it as a side-project if you are on Linux, but keep your production stack far away from it until they fix the weight recommendations and the Homebrew prefixing.

Ship clean code,

Marcus.

Marcus Webb - Senior Backend Analyst at UsedBy.ai

Related Articles

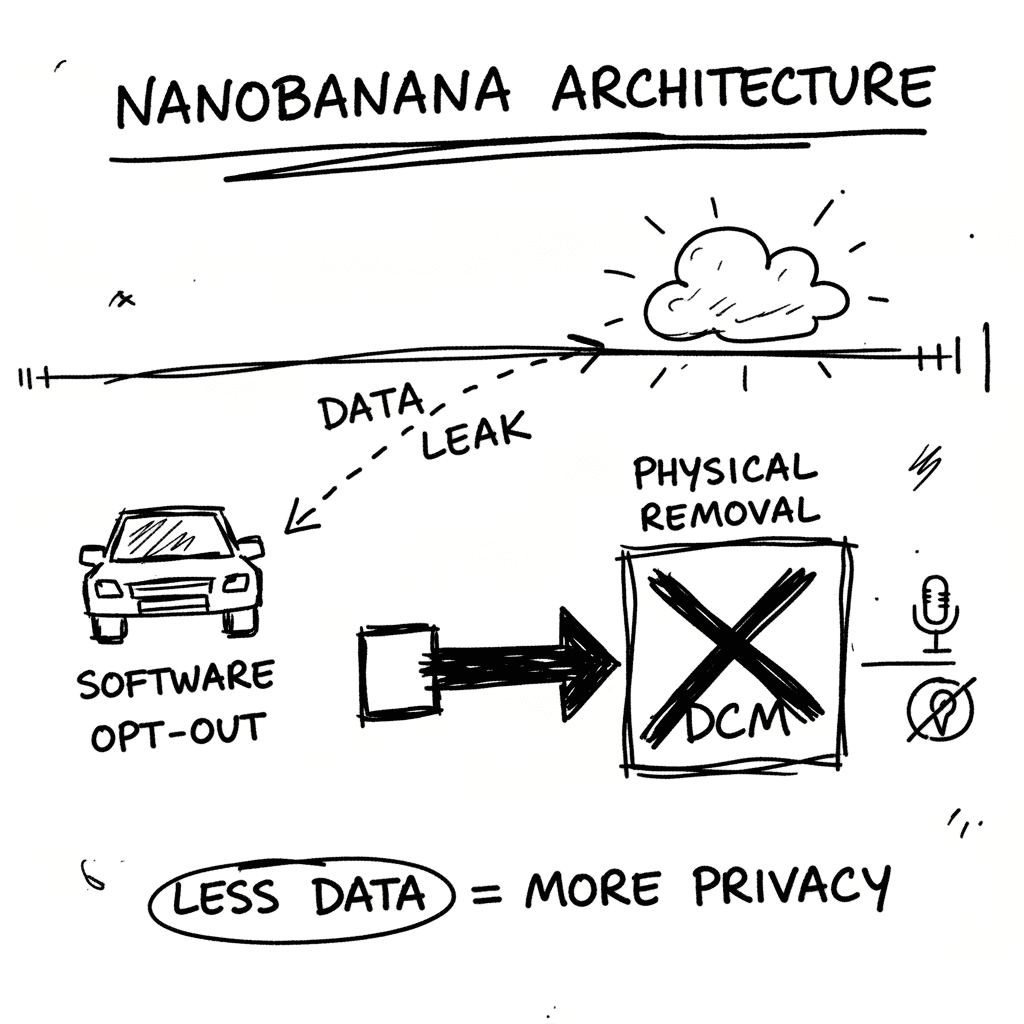

The Limits of Physical Telemetry Removal: A Case Study of the 2024 Toyota RAV4

Security engineer Arkadiy Tetelman has documented the physical disassembly required to disconnect the Data Communication Module (DCM) and GPS antenna in the 2024 Toyota RAV4 (source: arkadiyt.com). Th

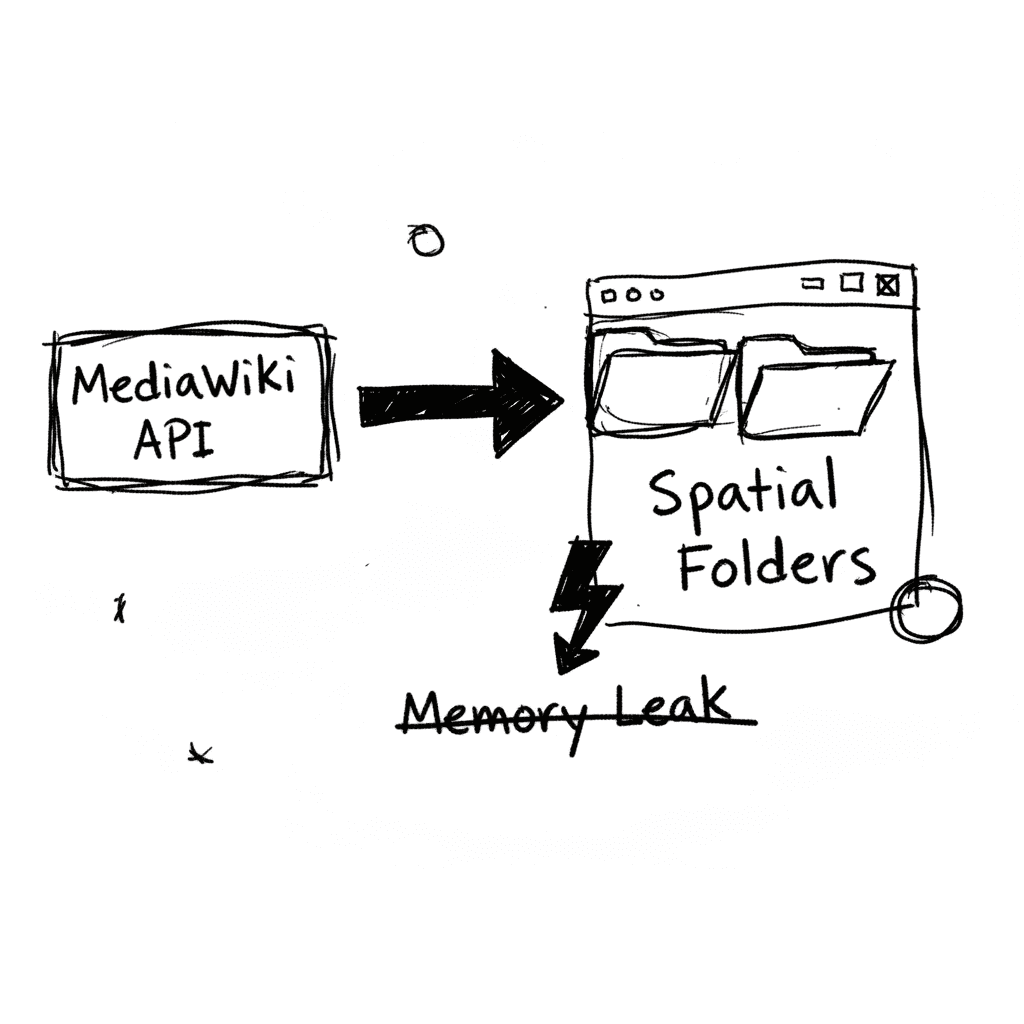

Wikipedia Explorer and the Spatial Mapping of MediaWiki APIs

Wikipedia Explorer maps the entirety of Wikipedia’s category hierarchy into a functional, spatial folder-and-file system via a high-fidelity web interface (Source: Neowin). By wrapping the MediaWiki A

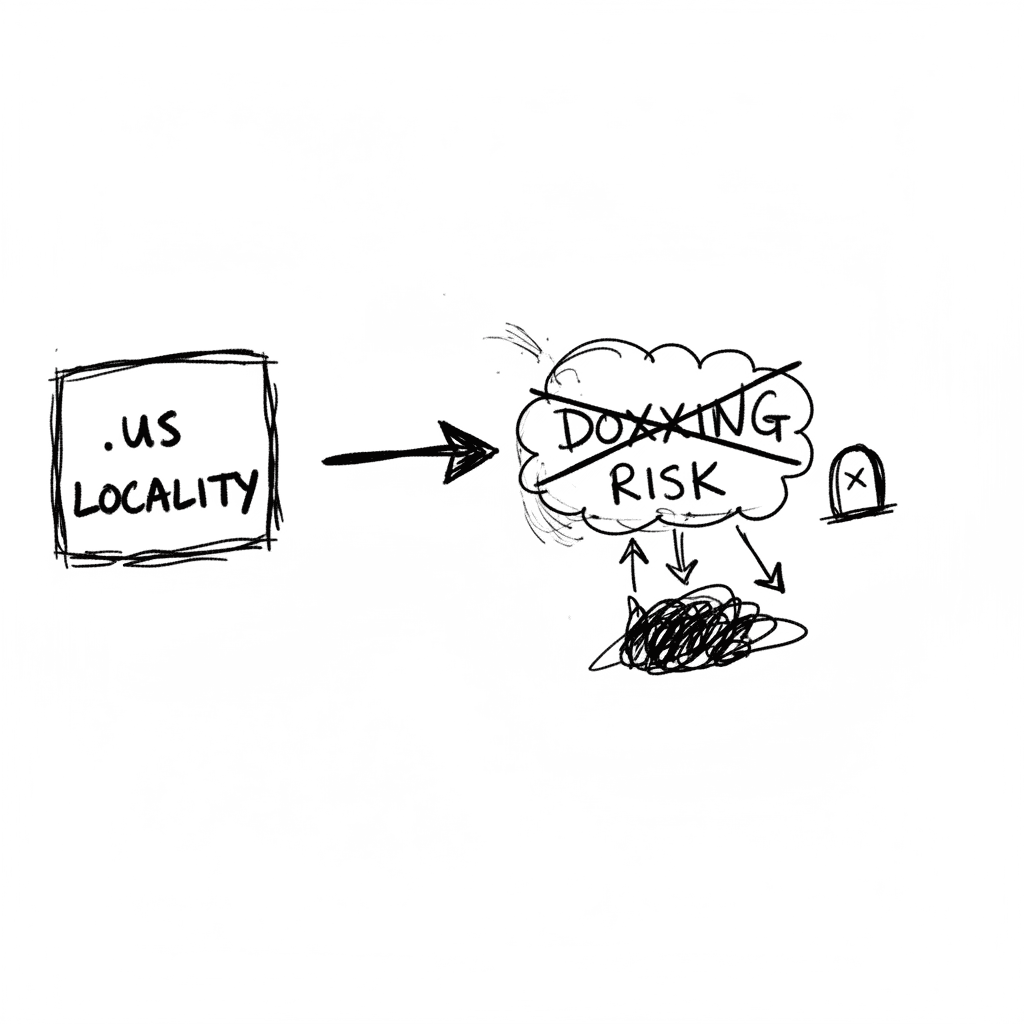

.us Locality Domains (*.city.state.us) — On Our Radar

.us Locality Domains (*.city.state.us) — On Our Radar

Stay Ahead of AI Adoption Trends

Get our latest reports and insights delivered to your inbox. No spam, just data.